Part 1 - Introduction

Today’s article provides an introduction to stored procedures in Snowflake, which can be used to create modular code with complex business logic by combining SQL statements with procedural logic. The article discusses the benefits of stored procedures, including error handling and dynamically creating SQL statements, and provides examples of how to create and use stored procedures in Snowflake.

Part 2 - What are Snowflake Stored Procedures?

A Snowflake stored procedure can be thought of as a function that lets users write modular code with complex business logic by combining multiple SQL statements with procedural logic. Typical uses include data migration and validation, with exceptions handled inside the procedure.

Part 3 - Benefits of Stored Procedures

- Procedural logic such as branching and looping, which plain SQL does not support.

- Error handling.

- Dynamically creating SQL statements to execute.

- Executing code with the privileges of the stored procedure's owner rather than the caller's. This allows stored procedure owners to delegate specific operations to users who otherwise could not perform them.

A stored procedure is created with a CREATE PROCEDURE command and is executed with a CALL command. The result is returned as a single value. The stored procedures in this article use JavaScript for logic and control flow, and the JavaScript API is used to run SQL statements.
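As a rough illustration of the first three benefits, the sketch below loops over a list of tables, builds each statement at run time, and catches failures per table. It is plain JavaScript meant to run outside Snowflake: `fakeSnowflake` is a hypothetical stand-in for the `snowflake` object that is available inside a procedure, and the table names are made up.

```javascript
// Hypothetical stand-in for the `snowflake` object available inside a procedure.
// It records the SQL text it receives and fails for one table so the error
// path is visible.
var executed = [];
var fakeSnowflake = {
  createStatement: function (options) {
    return {
      execute: function () {
        if (options.sqlText.indexOf("BROKEN_TABLE") !== -1) {
          throw new Error("table does not exist");
        }
        executed.push(options.sqlText);
      }
    };
  }
};

// Looping, dynamic SQL, and error handling in one function.
function checkTables(snowflake, tableNames) {
  var report = { ok: [], failed: {} };
  for (var i = 0; i < tableNames.length; i++) {
    var table = tableNames[i];
    try {
      // Dynamic SQL: the statement text is assembled at run time.
      var sql = "SELECT COUNT(*) FROM " + table;
      snowflake.createStatement({ sqlText: sql }).execute();
      report.ok.push(table);
    } catch (err) {
      // Error handling: record the failure and continue with the next table.
      report.failed[table] = err.message;
    }
  }
  return report;
}

var report = checkTables(fakeSnowflake, ["STAGE_A", "BROKEN_TABLE", "STAGE_B"]);
console.log(JSON.stringify(report));
```

Inside a real procedure, the body of `checkTables` would sit between the $$ delimiters and use the built-in `snowflake` object directly.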

Part 4 - Stored Procedure Examples

Let’s say we want to insert a row using a stored procedure.

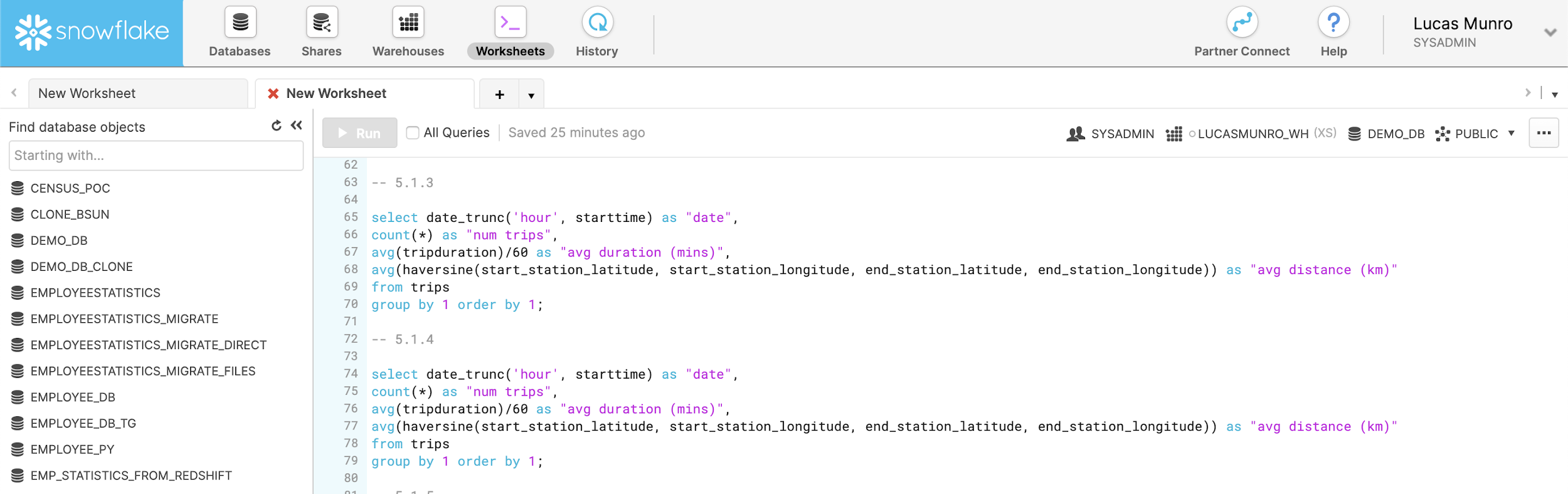

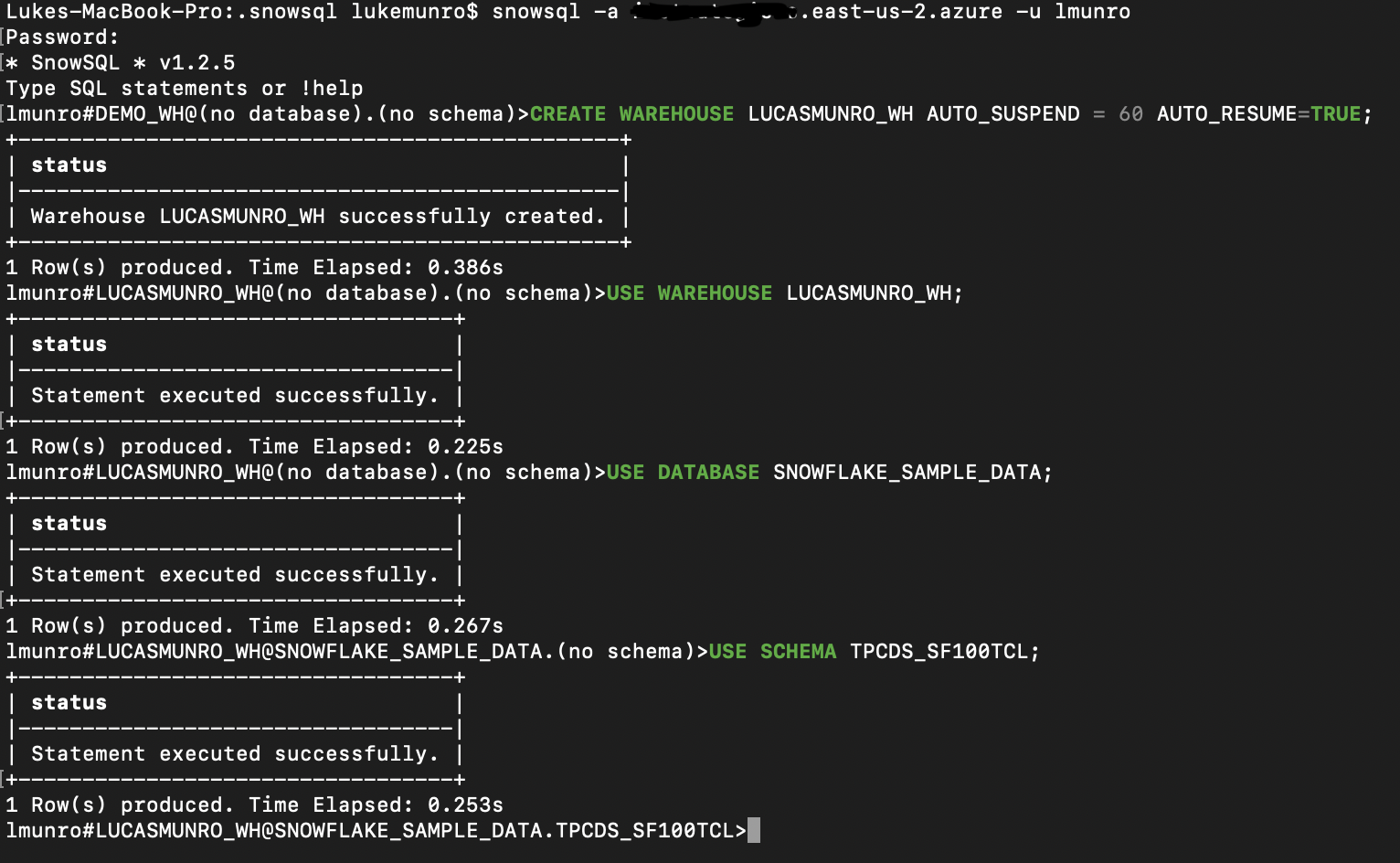

First, let’s select a database and create a table and a sequence to use.

USE DATABASE DEMO_DB;

CREATE OR REPLACE TABLE Employee(emp_id INT, emp_name varchar,emp_address varchar);

create sequence if not exists emp_id;

Now that we have that set up, let’s create our first stored procedure.

CREATE OR REPLACE PROCEDURE employee_insert(name varchar, address varchar)

RETURNS VARCHAR

LANGUAGE JAVASCRIPT

AS

$$

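// Bind the arguments instead of concatenating them, so values that contain quotes cannot break the statement.

var command = "INSERT INTO Employee (emp_id, emp_name, emp_address) VALUES (emp_id.nextval, ?, ?)";

var cmd_dict = {sqlText: command, binds: [NAME, ADDRESS]};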

var stmt = snowflake.createStatement(cmd_dict);

var rs = stmt.execute();

return 'success';

$$;

In the first section, we define the SP’s name, parameters, return type, and language. The actual code goes between the $$ delimiters. Note that the JavaScript code refers to the arguments in uppercase (NAME and ADDRESS). Now that everything is set up, we can use the SP with the CALL command like this:

CALL employee_insert('Name','Location');

Let’s give this a try and see what happens!

Awesome! That worked!

Make sure to use a database that exists in your console if you’re replicating this.
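One thing to watch when passing values into SQL from JavaScript is quoting. A value such as O'Brien ends a string literal early if it is concatenated into the statement text, whereas the `binds` option of `snowflake.createStatement` sends the values separately. The sketch below compares the two approaches in plain JavaScript; `stubSnowflake` is a hypothetical stand-in that only captures what it is given, and the helper function names are illustrative.

```javascript
// Hypothetical stand-in for Snowflake's `snowflake` object. It captures the
// options passed to createStatement so they can be inspected locally.
var captured = [];
var stubSnowflake = {
  createStatement: function (options) {
    captured.push(options);
    return { execute: function () { return null; } };
  }
};

// Concatenation: an apostrophe in the value ends the string literal early.
function insertByConcatenation(snowflake, name, address) {
  var sql = "INSERT INTO Employee (emp_id, emp_name, emp_address) " +
            "VALUES (emp_id.nextval, '" + name + "','" + address + "')";
  snowflake.createStatement({ sqlText: sql }).execute();
}

// Binds: the SQL text stays constant and the values travel separately.
function insertWithBinds(snowflake, name, address) {
  var sql = "INSERT INTO Employee (emp_id, emp_name, emp_address) " +
            "VALUES (emp_id.nextval, ?, ?)";
  snowflake.createStatement({ sqlText: sql, binds: [name, address] }).execute();
}

insertByConcatenation(stubSnowflake, "O'Brien", "Dublin");
insertWithBinds(stubSnowflake, "O'Brien", "Dublin");

console.log(captured[0].sqlText); // the name is embedded in the SQL text
console.log(captured[1].sqlText); // only ? placeholders appear in the SQL text
console.log(JSON.stringify(captured[1].binds));
```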

However, a simple insert isn’t particularly useful for a Stored Procedure. We’d be better off just using pure SQL. Let’s look at a more realistic example that better demonstrates the power of Stored Procedures.

Taking it Further

Let’s imagine the following scenario. You receive an employee’s data in JSON. You want to store it in the table, but first you have to ensure the following:

- Employee name cannot be empty or NULL

- Employee name must be unique

- Employee address cannot be NULL

It is possible to do all of this in a single stored procedure. However, since we have two distinct tasks (validation and insert), it’s better practice to split them into two separate procedures and call the validation procedure from inside the insert procedure. We’ll use Snowflake’s VARIANT type to return a JSON object. Let’s take a look at our validate procedure.

Validate Procedure

create or replace procedure employee_validate_json(INPUT VARCHAR)

RETURNS VARIANT NOT NULL

LANGUAGE JAVASCRIPT

AS

$$

var json_row = {};

var error_count = 0;

try {

var employee_data = JSON.parse(INPUT);

var employee_id = employee_data.employee_id;

var employee_name = employee_data.employee_name;

var employee_address = employee_data.employee_address;

if (!employee_name) {

json_row["employee_name"] = "employee name cannot be empty or null.";

error_count = 1;

} else {

// Bind the name instead of concatenating it into the SQL text.

var command = "select count(*) from Employee where emp_name = ?";

var stmt = snowflake.createStatement({sqlText: command, binds: [employee_name]});

var res = stmt.execute();

res.next();

var row_count = res.getColumnValue(1);

// check for duplicate

if (row_count > 0) {

json_row["employee_name"] = "employee name already exists in table.";

error_count = 1;

}

}

if (employee_address == null || employee_address == undefined) {

json_row["employee_address"] = "employee address should not be NULL.";

error_count = 1;

}

json_row["is_error"] = error_count;

} catch (err) {

json_row["Exception"] = "Exception: " + err;

json_row["is_error"] = 1;

}

return json_row;

$$;

The stored procedure will either return is_error = 0 if there is nothing wrong with our JSON or return is_error = 1 with the appropriate error message.

Successful run:

call EMPLOYEE_VALIDATE_JSON('{

"employee_name": "Lucas", "employee_address": "Los Angeles"

}');

Result:

{ "is_error": 0 }

Error run:

call EMPLOYEE_VALIDATE_JSON('{

"employee_name": "", "employee_address": "Los Angeles"

}');

Result:

{"employee_name": "employee name cannot be empty or null.", "is_error": 1 }
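The three validation rules can also be exercised outside Snowflake. The following is a minimal sketch in plain JavaScript that mirrors the procedure's logic; `validateEmployee` and the `nameExists` callback are hypothetical names, with the callback standing in for the COUNT(*) query against the Employee table.

```javascript
// Mirrors the validation rules of the procedure. `nameExists` is a
// hypothetical callback that replaces the COUNT(*) lookup.
function validateEmployee(input, nameExists) {
  var result = {};
  var errorCount = 0;
  try {
    var data = JSON.parse(input);
    if (!data.employee_name) {
      // Covers "", null, and a missing key.
      result["employee_name"] = "employee name cannot be empty or null.";
      errorCount = 1;
    } else if (nameExists(data.employee_name)) {
      result["employee_name"] = "employee name already exists in table.";
      errorCount = 1;
    }
    // `== null` is true for both null and undefined (a missing key).
    if (data.employee_address == null) {
      result["employee_address"] = "employee address should not be NULL.";
      errorCount = 1;
    }
    result["is_error"] = errorCount;
  } catch (err) {
    // Malformed JSON ends up here.
    result["Exception"] = "Exception: " + err;
    result["is_error"] = 1;
  }
  return result;
}

// Pretend the table already contains one employee.
var existingNames = ["Lucas"];
function nameExists(name) {
  return existingNames.indexOf(name) !== -1;
}

var valid = validateEmployee('{"employee_name": "Ana", "employee_address": "Lima"}', nameExists);
var emptyName = validateEmployee('{"employee_name": "", "employee_address": "Lima"}', nameExists);
var duplicate = validateEmployee('{"employee_name": "Lucas", "employee_address": "Lima"}', nameExists);
var badJson = validateEmployee('not json', nameExists);

console.log(JSON.stringify([valid, emptyName, duplicate, badJson]));
```

Keeping the rules in one small function like this makes it easier to check edge cases, such as a missing key or malformed JSON, before deploying the procedure.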

Now that we have our validation working, let’s dive into our insert procedure!

Insert Procedure

Our insert procedure is going to call our validate procedure and check for any errors. If it finds any, it will return them. If not, it will attempt to insert the data into the table. Either way, it returns a JSON object upon completion.

create or replace procedure employee_insert_json(INPUT VARCHAR)

RETURNS VARIANT NOT NULL

LANGUAGE JAVASCRIPT

AS

$$

var json_row = {};

var message = {};

var detail = {};

var result = '';

var error_count = 0;

try {

var employee_data = JSON.parse(INPUT);

var employee_name = employee_data.employee_name;

var employee_address = employee_data.employee_address;

// Pass the JSON as a bind value so quotes inside it cannot break the CALL statement.

var command_validate = "call employee_validate_json(?)";

var cmd_dict_validate = {sqlText: command_validate, binds: [INPUT]};

var stmt_validate = snowflake.createStatement(cmd_dict_validate);

var rs_validate = stmt_validate.execute();

rs_validate.next();

var validate_result = rs_validate.getColumnValue(1);

if (validate_result.is_error > 0) {

return validate_result;

} else {

var command = "INSERT INTO employee(emp_id,emp_name,emp_address) VALUES(emp_id.nextval, ?, ?)";

var cmd_dict = {sqlText: command, binds: [employee_name, employee_address]};

var stmt = snowflake.createStatement(cmd_dict);

var rs = stmt.execute();

json_row["message"] = "successfully inserted employee";

}

} catch (err) {

json_row["exception"] = err;

error_count = 1;

}

json_row["is_error"] = error_count;

return json_row;

$$;

Let’s take a look at some sample runs.

call EMPLOYEE_INSERT_JSON('{

"employee_name": "Lucas", "employee_address": "Los Angeles"

}');

Result:

{ "is_error": 0, "message": "successfully inserted employee" }

Nice! That worked, but let’s see what happens if we try to run the same command again.

call EMPLOYEE_INSERT_JSON('{

"employee_name": "Lucas", "employee_address": "Los Angeles"

}');

Result:

{"employee_name": "employee name already exists in table.", "is_error": 1 }

When we try it again, our validate procedure finds that the employee name already exists!
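The validate-then-insert flow of the two sample runs can be sketched in plain JavaScript as well. This is only an approximation for local experimentation: `employeeTable` is an in-memory array standing in for the Employee table, and `checkEmployee` and `insertEmployee` are hypothetical, simplified counterparts of the two procedures.

```javascript
// In-memory stand-in for the Employee table (an assumption for local testing).
var employeeTable = [];

// Simplified validator: only the empty-name and duplicate-name rules.
function checkEmployee(data) {
  var result = { is_error: 0 };
  var taken = employeeTable.some(function (row) {
    return row.emp_name === data.employee_name;
  });
  if (!data.employee_name) {
    result.employee_name = "employee name cannot be empty or null.";
    result.is_error = 1;
  } else if (taken) {
    result.employee_name = "employee name already exists in table.";
    result.is_error = 1;
  }
  return result;
}

// Validate first and return early on errors; insert only when validation passes.
function insertEmployee(input) {
  var json_row = {};
  var error_count = 0;
  try {
    var data = JSON.parse(input);
    var check = checkEmployee(data);
    if (check.is_error > 0) {
      return check; // stop before touching the table
    }
    employeeTable.push({
      emp_name: data.employee_name,
      emp_address: data.employee_address
    });
    json_row.message = "successfully inserted employee";
  } catch (err) {
    json_row.exception = String(err);
    error_count = 1;
  }
  json_row.is_error = error_count;
  return json_row;
}

var payload = '{"employee_name": "Lucas", "employee_address": "Los Angeles"}';
var first = insertEmployee(payload);
var second = insertEmployee(payload);
console.log(JSON.stringify(first));
console.log(JSON.stringify(second));
```

The early return is the key design choice here: the insert never runs once the validator reports an error, so the second call leaves the table unchanged.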

Part 5 - Try Snoptimizer today!

Snoptimizer quickly and automatically optimizes your Snowflake account for security, cost, and performance. It eliminates headaches and concerns about security risks and cost overruns across your Snowflake account.

Try Snoptimizer today. Sign up and schedule a personal demo with us!

Part 6 - Conclusion

Stored procedures are a great way to streamline your Snowflake tasks. They can also be used to grant higher-level access to lower-level users in a defined manner. I hope this tutorial has helped you create or transfer your stored procedures to Snowflake.
