The Power of Instantaneous Data Sharing - Updated

How awesome would it be to share data quickly instead of exporting it to some format like Excel and then emailing it out? I'm always looking for new ways to make sharing data faster and easier. When I think back over the past 20-30 years in tech, I think of all sorts of data sharing tools and the evolution of data. Do you remember VSAM files? Lotus Notes? SharePoint? Dropbox?


Frank Bell

July 27, 2018

(Continued... from https://www.linkedin.com/pulse/power-instantaneous-data-sharing-frank-bell/)

Over the past 20-30 years, huge investments have been made in BOTH people and technology in order to share data more effectively and quickly. We currently have millions of data analysts, data scientists, data engineers, data this and data that all over the world. Data keeps growing, and it is a huge part of our economy and our growth as a society. At the same time, though, the tools to share it never really matured that much until recently, with Snowflake’s Data Sharing functionality.

Before I explain how transformative this new “data sharing” or “logical data access” functionality is, let’s take a step back and look at how “data sharing” worked before this.

Brief Tech History of Data Sharing. Here are some of the old and semi-new tools:

Good old fashioned physical media. (floppy disks, 3.5 inch disks, hard drives, USB drives, etc.)

Email. Probably still the best for smaller amounts of data and files. I’ve done it too. When I need a super fast way to move an Excel file with data from one computer to another, it's email to the rescue.

SFTP/FTP. SSH File Transfer Protocol (often called Secure File Transfer Protocol) and plain File Transfer Protocol.

EDI (yuck) - Electronic Data Interchange - The business side of me gets hives just thinking about how expensive and crappy a business solution this is. Companies spent millions creating EDI exchanges. It is a cumbersome and expensive process, but at the time it was the accepted way to exchange data.

SCP. Secure Copy Protocol. Great command line tool for technical users.

APIs (Application Programming Interfaces). While APIs have been amazing and have come a long way, there is still technical friction in sharing data through them.

Dropbox, etc. Dropbox revolutionized the ease of sharing, but mainly for files. It's still not really great for true data sharing.

AirDrop-type functionality.

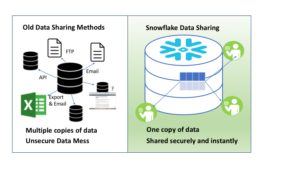

Let’s face it though, most of these are primitive and have a lot of friction, especially for non-technical users. Even when they are slick like AirDrop, they typically don’t work across platforms and are often limited in data size and to discrete files. All of the solutions above have a lot of limitations. When you think of the friction involved in getting quality “data” and “information” for analysis and use from one place to another, it's still relatively painful.

Enter Snowflake’s data sharing. Snowflake has created a concept of “data sharing” through a “data share,” which makes sharing larger structured and unstructured data a lot easier. One of the biggest improvements is that there is only ONE SOURCE OF DATA. Let me say it again, yes, that’s ONE SOURCE OF DATA. This isn’t your typical copying of data, which creates all sorts of problems with data integrity and data governance. It's the same consistent data shared throughout your departments, organizations, or with customers.

The main point here is that there is true power in effective and fast data sharing. If you can make decisions faster than your competitors, or you can help your constituents with faster service, then it makes your organization much better overall.


Also, it's just easy to do. With a very simple command you can share data with any other Snowflake account. The only real catch is that the other party does need a Snowflake account, but with that account you are only charged for what you use. For example, if you have a personal account that you don’t use very often, you are not charged anything per month except $40/TB of storage. If you don’t store anything, you are not charged for that either, and the only charge would be compute (queries against someone else’s data share), which would be pretty inexpensive. For organizations with Big Data, this cost is very reasonable compared to all the legacy solutions that were required in the past, which are slower, more cumbersome, and more expensive.
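To give a feel for what "easy" looks like, here is a minimal sketch in Python that just builds the SQL text a provider and a consumer might run. It assumes Snowflake's standard share statements (CREATE SHARE, GRANT ... TO SHARE, ALTER SHARE ... ADD ACCOUNTS, CREATE DATABASE ... FROM SHARE). Every object name and account identifier below is hypothetical, and the identifier check is a deliberate simplification that only accepts plain unquoted names.

```python
import re

# Simplifying assumption: only plain, unquoted identifiers are accepted.
_IDENTIFIER = re.compile(r"[A-Za-z_][A-Za-z0-9_$]*")


def _check(name: str) -> str:
    """Reject anything that is not a simple identifier (avoids SQL injection)."""
    if not _IDENTIFIER.fullmatch(name):
        raise ValueError(f"not a simple identifier: {name!r}")
    return name


def provider_share_statements(share, database, schema, table, consumer_account):
    """Build the statements a data provider would run to share one table."""
    for part in (share, database, schema, table, consumer_account):
        _check(part)
    return [
        f"CREATE SHARE {share};",
        f"GRANT USAGE ON DATABASE {database} TO SHARE {share};",
        f"GRANT USAGE ON SCHEMA {database}.{schema} TO SHARE {share};",
        f"GRANT SELECT ON TABLE {database}.{schema}.{table} TO SHARE {share};",
        f"ALTER SHARE {share} ADD ACCOUNTS = {consumer_account};",
    ]


def consumer_statement(local_database, provider_account, share):
    """Build the statement a consumer would run to mount the share read-only."""
    for part in (local_database, provider_account, share):
        _check(part)
    return f"CREATE DATABASE {local_database} FROM SHARE {provider_account}.{share};"


if __name__ == "__main__":
    # Hypothetical names, for illustration only.
    for stmt in provider_share_statements(
        "sales_share", "sales_db", "public", "orders", "xy12345"
    ):
        print(stmt)
    print(consumer_statement("shared_sales", "ab67890", "sales_share"))
```

Nothing here copies data. The consumer's database is a read-only pointer at the provider's tables, which is the "one source of data" idea described earlier.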

What challenges does this solve today?

Cross Enterprise Sharing. Let’s say you need to compare how different brands are performing across websites, or you need to compare financials. You can now easily share this data with integrity across the enterprise, and roll up and integrate different business units' data as necessary.

Partner/Extranet Type Data Sharing. You can share data with your partners with much more speed and integrity, and with much less complexity than APIs require.

Data Provider Sharing. Data providers that need to share data can reduce costs and friction by more easily sharing their data, down to the row level, with different customers.
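Row-level sharing can be sketched the same way. One pattern is to share a secure view that joins the data to a mapping table and filters on CURRENT_ACCOUNT(), so each consumer account only sees its own rows. The Python below only builds the SQL text. The table, column, view, and share names are all hypothetical, and it assumes the share has already been granted USAGE on the database and schema.

```python
import re

# Simplifying assumption: only plain, unquoted identifiers are accepted.
_IDENTIFIER = re.compile(r"[A-Za-z_][A-Za-z0-9_$]*")


def _check(name: str) -> str:
    """Reject anything that is not a simple identifier."""
    if not _IDENTIFIER.fullmatch(name):
        raise ValueError(f"not a simple identifier: {name!r}")
    return name


def row_level_share_statements(
    share, database, schema, data_table, mapping_table, view,
    key_column, account_column,
):
    """Build statements that expose only a consumer's own rows through a share.

    The mapping table (hypothetical) pairs each key value with the Snowflake
    account allowed to see rows carrying that key.
    """
    for part in (share, database, schema, data_table, mapping_table,
                 view, key_column, account_column):
        _check(part)
    prefix = f"{database}.{schema}"
    create_view = (
        f"CREATE SECURE VIEW {prefix}.{view} AS "
        f"SELECT d.* FROM {prefix}.{data_table} d "
        f"JOIN {prefix}.{mapping_table} m "
        f"ON d.{key_column} = m.{key_column} "
        f"WHERE m.{account_column} = CURRENT_ACCOUNT();"
    )
    grant = f"GRANT SELECT ON VIEW {prefix}.{view} TO SHARE {share};"
    return [create_view, grant]


if __name__ == "__main__":
    # Hypothetical names, for illustration only.
    for stmt in row_level_share_statements(
        "provider_share", "market_db", "public", "prices",
        "customer_access", "prices_by_customer",
        "customer_id", "snowflake_account",
    ):
        print(stmt)
```

The design choice worth noting is that the base table is never granted to the share, only the secure view, so the filter cannot be bypassed by the consumer.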

As things get more and more complex (I mean, is there really any corporation saving less data this year than last?), we need to challenge ourselves to make things simpler. That is what Snowflake has done. I encourage you to take a look for yourself and try it out for free. We will be sending out some Data Sharing examples in the next few weeks as well, so stay tuned.

Also, if you don’t believe me, look at all the reference case studies that have come out in the last few months. Data Sharing has the power to transform companies, partners, and industries. At least investigate it so that you are not left behind.

Here is a Data Sharing for Dummies Video for more information on the technology.
